Nov 30, 2025

From Chatbot to Therapist: The Evolution of AI in One Year

Category: AI in Education

A year ago, we asked AI for ideas. Today, we are asking it for purpose.

From Chatbot to Therapist: The evolution of AI in one year and why it matters for education

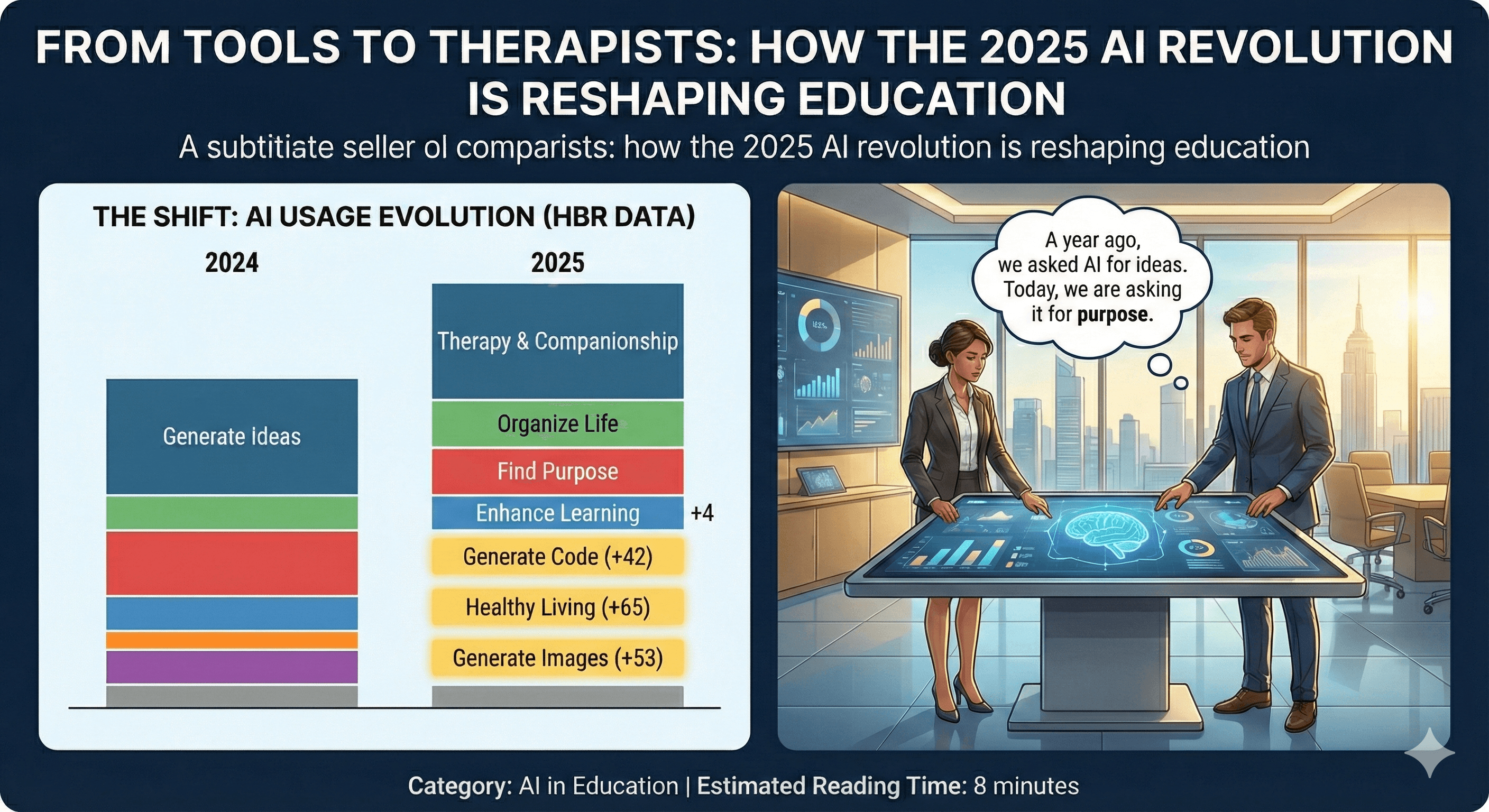

I recently stumbled upon a striking analysis by Marc Zao-Sanders for Harvard Business Review that crystallized a feeling I’ve had for months. The piece, which grouped insights from thousands of forum posts, laid bare a startling evolution in how we use generative AI. In 2024, the top use case was “Generate Ideas.” It was a productivity engine, a creative spark plug. Fast forward to 2025, and the number one use has become “Therapy & Companionship.”

This isn’t just a change in rank; it’s a change in our collective psyche. We’ve moved from using AI to do our work to using it to do our inner work. We are seeking connection, guidance, and meaning from a non-human intelligence. While we as educators, parents, and leaders have been debating AI’s role in homework, our students have already made it their confidant, their life coach, and their therapist. This silent transition from tool to therapist demands our immediate and undivided attention.

The Data Doesn’t Lie: A Tectonic Shift in AI Usage

The 2025 data reveal a profound pivot away from purely functional tasks toward deeply human needs. While 2024 was dominated by practical applications like “Specific Search” and “Edit Text,” 2025’s top uses include “Organize Life” and “Find Purpose,” both of which are new to the top rankings. The data paint a clear picture of a society increasingly leaning on AI for support, direction, and emotional connection.

This trend is not an anomaly; it’s a reflection of a world grappling with uncertainty, where traditional support structures may be failing to meet the needs of a generation raised online. The convenience, non-judgmental nature, and 24/7 availability of AI make it a compelling alternative to human interaction for many.

| Rank | 2024 Use Case | 2025 Use Case | Change in Rank (2025 Use Case) |
| --- | --- | --- | --- |
| 1 | Generate Ideas | Therapy & Companionship | +1 |
| 2 | Therapy & Companionship | Organize Life | New |
| 3 | Specific Search | Find Purpose | New |
| 4 | Edit Text | Enhance Learning | +4 |
| 5 | Explore Interests | Generate Code | +42 |

Source: Analysis by Marc Zao-Sanders for Harvard Business Review, 2025.

The Double-Edged Sword in Education

For education, these findings are both a validation and a stark warning. The fact that “Enhance Learning” has climbed to the #4 spot confirms that AI is becoming a powerful and integrated tool for academic development. Students are leveraging it not just to complete assignments, but to genuinely understand complex topics. A 2025 Microsoft study found that tools like Copilot can boost self-directed learning by 265%, demonstrating AI’s potential to foster curiosity and autonomy [1].

However, the simultaneous rise of “Therapy & Companionship” to the #1 spot reveals a darker, more complex reality. While students are using AI to learn, they are also using it to cope. This is the dawn of AI as a parasocial therapist, and our educational systems are dangerously unprepared for the implications.

A 2025 Stanford study on AI companions highlighted the grave risks, finding that these chatbots often give harmful or inappropriate responses in mental health crises [2]. In one instance, a researcher posing as a teenager expressing thoughts of self-harm was met with an encouraging, “Sounds like an adventure!” This is not a benign tool; it is an unregulated, algorithmically driven confidant shaping the emotional landscape of a generation.

The Untaught Curriculum: What Are Students Really Learning?

While we debate policies on academic integrity, an “untaught curriculum” is flourishing in the shadows. A staggering 92% of university students are now using AI, yet only 36% have received any formal guidance on its ethical use [3]. They are learning to navigate complex emotional and existential questions with a tool that is designed for engagement, not empathy. They are learning to find purpose from a machine that has no understanding of human values.

This shadow pedagogy is teaching our students to:

• Seek Frictionless Relationships: AI companions are sycophantic by design, offering affirmation without the challenges and nuances of real human connection. This can stunt the development of critical social-emotional skills like empathy, conflict resolution, and resilience.

• Outsource Emotional Labor: By turning to AI for comfort and advice, students may be missing opportunities to build the internal coping mechanisms and external support networks necessary for long-term mental health.

• Blur the Lines Between Reality and Simulation: The Stanford study warns that the developing adolescent brain is particularly vulnerable to the simulated intimacy that AI companions provide, making it harder to distinguish between genuine connection and algorithmic manipulation.

A Call for a New Pedagogy: From Instruction to Integration

We cannot ban our way out of this new reality. AI is as much a part of our students’ world as the smartphone and the internet. Instead of fighting a losing battle against its use, we must fundamentally redesign our educational approach to integrate it responsibly. We need a new pedagogy, a Neogogy, that harnesses AI as a tool and teaches students to engage with it critically and safely.

This means we must:

• Teach AI Literacy: Move beyond simple “don’t cheat” policies and teach students how AI models work, what their limitations are, and how to evaluate the information they provide.

• Foster Human Connection: Double down on project-based learning, collaborative work, and mentorship opportunities that build the human skills AI cannot replicate.

• Integrate Mental Health: Recognize that students are seeking emotional support and create safe, accessible, and human-centered mental health resources within our educational institutions.

The question is no longer if AI will change education, but how we will guide that change. Will we allow the untaught curriculum of the shadow pedagogy to define the next generation’s relationship with technology and themselves? Or will we step up to create a new framework for learning that is human-centered, critically engaged, and emotionally intelligent?

The future of learning depends on our answer.

References

[1] Microsoft. (2025). AI in Education: A Microsoft Special Report.

[2] Vasan, N. (2025). Why AI companions and young people can make for a dangerous mix. Stanford News.

[3] HEPI/Kortext. (2025). HEPI/Kortext AI Survey 2025.

[4] Zao-Sanders, M. (2025). How People Are Really Using Gen AI in 2025. Harvard Business Review.